Performance that holds up in production

QPS at 10M vectors

Built for real-time AI applications that can’t afford to wait.

recall at scale

No accuracy tradeoff as your dataset grows.

milliseconds

p99 latency for consistent performance from prototype to production.

Secure AI deployment anywhere

Discover how Actian VectorAI DB helps you build and run AI applications anywhere, without cloud dependencies. This portable, local-first vector database delivers fast, predictable retrieval while keeping your data and infrastructure fully under your control.

Cloud vector databases weren’t built for your edge case

Network latency blocks real-time applications

Cloud round-trips add 200-400ms to every query you run. You can’t build sub-100ms applications when your database contributes most of the latency.
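To make the arithmetic concrete, the sketch below checks whether a query fits a 100 ms budget using the 200-400 ms round-trip range quoted above. The 5 ms processing time and the `fits_budget` helper are illustrative assumptions, not measured figures or part of any product API.

```python
def fits_budget(network_ms: float, processing_ms: float, budget_ms: float = 100.0) -> bool:
    """Return True if network time plus processing time stays within the budget."""
    return network_ms + processing_ms <= budget_ms


# Assumed for illustration only: 5 ms of query processing in both setups.
PROCESSING_MS = 5.0

# Cloud round-trip range quoted in the text above.
for round_trip_ms in (200.0, 400.0):
    print(f"cloud, {round_trip_ms:.0f} ms round-trip:", fits_budget(round_trip_ms, PROCESSING_MS))

# A database running next to the application has no network round-trip in this model.
print("local, 0 ms round-trip:", fits_budget(0.0, PROCESSING_MS))
```

Under these assumptions the round-trip alone exceeds the budget before any query work happens, which is the point the paragraph makes.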

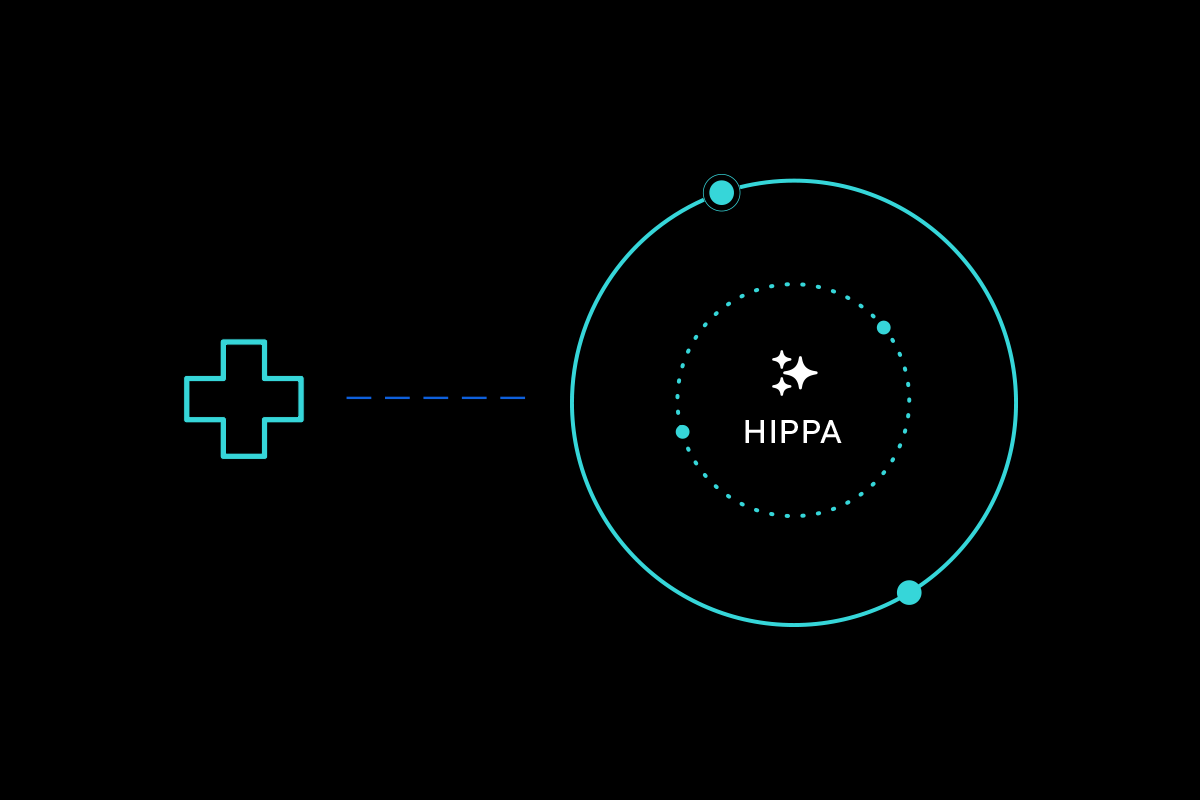

Third-party infrastructure blocks regulated deployments

Regulations such as HIPAA and GDPR restrict where your data is processed and who may handle it. Cloud services introduce third-party processing that can conflict with your compliance requirements.

Cloud-only architecture blocks entire deployment scenarios

Your edge devices, disconnected environments, and embedded systems can’t assume reliable internet. Cloud databases leave entire classes of your AI applications unaddressed.

From install to production in minutes

Explore resources and build apps using your language of choice

- Step 1

- Step 2: Build

- Step 3: Deploy

```shell
# Install with Docker
docker pull actian/vectorai-db
docker run -d -p 50051:50051 actian/vectorai-db
```

```python
# Assumes `client` is already connected to the running container and that
# VectorParams, Distance, and PointStruct are imported from the client library.

# Define a collection: set the vector size to match your embedding model
client.collections.create(
    "products",
    vectors_config=VectorParams(size=128, distance=Distance.Cosine),
)

# Insert vectors: embed() is your model (e.g. sentence-transformers, OpenAI)
client.points.upsert("products", [
    PointStruct(id=1, vector=embed("wireless headphones"), payload={"name": "AirPods Pro"}),
    PointStruct(id=2, vector=embed("noise cancelling buds"), payload={"name": "Sony WF-1000XM5"}),
    PointStruct(id=3, vector=embed("budget earbuds"), payload={"name": "Soundcore Q20"}),
])

# Search by meaning, not keywords. Returns the closest matches by similarity.
results = client.points.search("products", vector=embed("earbuds under $50"), limit=3)
for r in results:
    print(f"{r.payload['name']} - score: {r.score:.3f}")
```
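The snippet above leaves `embed()` to your model and asks for cosine distance. As a rough illustration of what those two pieces do, here is a minimal sketch with a toy stand-in for `embed()` that produces 128-dimensional vectors (matching `size=128`) and a hand-written cosine similarity. Both `toy_embed` and `cosine_similarity` are hypothetical helpers written for this example, not part of the VectorAI DB client. The hashed word counts only capture word overlap, so a real embedding model is still needed for search by meaning.

```python
import hashlib
import math

DIM = 128  # must equal the `size` given when the collection was created


def toy_embed(text: str, dim: int = DIM) -> list[float]:
    """Toy stand-in for a real model: hash each word into a bucket, then L2-normalize."""
    vec = [0.0] * dim
    for word in text.lower().split():
        digest = hashlib.sha256(word.encode("utf-8")).digest()
        bucket = int.from_bytes(digest[:4], "big") % dim
        vec[bucket] += 1.0
    norm = math.sqrt(sum(x * x for x in vec))
    return [x / norm for x in vec] if norm else vec


def cosine_similarity(a: list[float], b: list[float]) -> float:
    """Cosine of the angle between two vectors; 0.0 if either vector is all zeros."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    if norm_a == 0.0 or norm_b == 0.0:
        return 0.0
    return dot / (norm_a * norm_b)


query = toy_embed("budget earbuds")
for text in ("budget earbuds", "wireless headphones"):
    print(text, round(cosine_similarity(query, toy_embed(text)), 3))
```

Whatever model you use, the length of the vectors it returns has to match the collection's configured size.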

✓ Laptop (development)

✓ Raspberry Pi (embedded)

✓ Factory edge servers (on-prem)

✓ Data center (enterprise)

✓ Cloud (optional)

One database. No re-platforming. No vendor lock-in.

Built for developers at the edge

Actian VectorAI DB enables portable AI by offering:

FAQ

Actian VectorAI DB is a portable, local-first vector database built for AI systems that run beyond the cloud. It enables developers to run semantic and hybrid search close to their data, delivering low-latency, predictable retrieval across edge, on-prem, hybrid, and cloud environments.

Most vector databases are built for cloud-native deployments, while VectorAI DB is designed to run consistently across edge, on-prem, hybrid, and cloud environments. It delivers portable, local-first retrieval with predictable performance, including up to 22× higher QPS at scale on identical self-hosted hardware.

VectorAI DB supports modern Approximate Nearest Neighbor (ANN) indexing methods, including HNSW, for low-latency, high-accuracy search at scale.
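ANN indexes such as HNSW trade exactness for speed, so their accuracy is usually reported as recall: the fraction of the true nearest neighbors that the index actually returned. The sketch below shows one common way to measure it, by comparing a result list against an exact brute-force search. The vectors are made up, `approx_ids` is a hard-coded stand-in for an ANN result, and none of the function names come from VectorAI DB.

```python
import math


def cosine_distance(a, b):
    """1 - cosine similarity. Assumes both vectors are non-zero."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return 1.0 - dot / (norm_a * norm_b)


def exact_top_k(query, vectors, k):
    """Brute-force search: rank every stored vector by cosine distance to the query."""
    ranked = sorted(vectors, key=lambda vid: cosine_distance(query, vectors[vid]))
    return ranked[:k]


def recall_at_k(approx_ids, exact_ids):
    """Fraction of the exact top-k ids that also appear in the approximate result."""
    return len(set(approx_ids) & set(exact_ids)) / len(exact_ids)


# Made-up 2-D vectors keyed by id, for illustration only.
vectors = {
    1: [1.0, 0.0],
    2: [0.9, 0.1],
    3: [0.0, 1.0],
    4: [-1.0, 0.0],
}
query = [1.0, 0.0]

exact_ids = exact_top_k(query, vectors, k=2)
approx_ids = [1, 3]  # hypothetical ANN output that missed one true neighbor

print(exact_ids, recall_at_k(approx_ids, exact_ids))
```

Brute force scans every vector, so it is only practical as a ground-truth baseline on a sample of queries.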

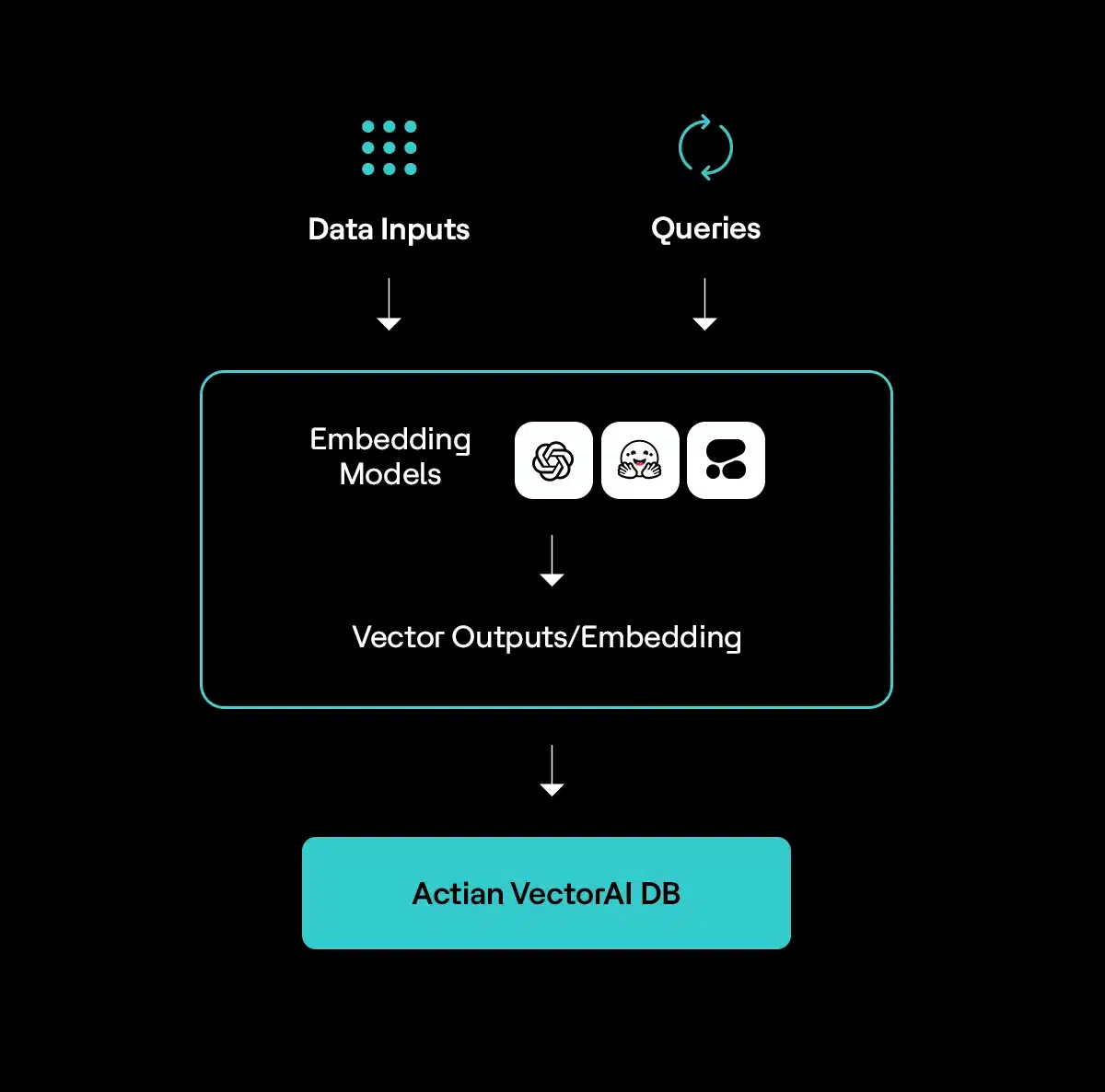

VectorAI DB is model-agnostic and works with embeddings generated by any provider or framework. This includes OpenAI, Anthropic, Cohere, open-source models such as those hosted on Hugging Face, and custom or fine-tuned models.

VectorAI DB supports multimodal data: it can create and store vector embeddings from sources such as text, images, audio, and video.